The Importance of Liquid Cooling in the Data Center Environment

by Michael Rareshide, on Nov 27, 2018 4:45:36 PM

One would think that running liquids around highly sensitive data center equipment would be a recipe for disaster. But in the data center world, liquid cooling technologies have improved significantly, and in high-density deployments their benefits can now exceed those of traditional air-cooled systems. Rack power densities are increasing, and so is the heat this equipment produces. That heat must be removed to protect the equipment, so liquid cooling is increasingly being explored as an important, and in some cases necessary, solution.

According to the Uptime Institute’s 2018 Global Data Center Survey:

- In 2018, about one-fifth of respondents said their highest-density rack was 30 kilowatts (kW) or higher, which suggests that density extremes in data centers are escalating.

- Fewer than 15% of respondents said they are using liquid cooling for their highest-density racks, but this is still a significant increase over prior years, and adoption is expected to continue.

A brief history of data center cooling

For much of the past 10-15 years, most legacy data centers were configured with server racks whose equipment drew 3-5 kW per rack when full. Air-cooled systems, using perimeter cooling in conjunction with a chiller system and variable-speed computer room air conditioning (CRAC) units, consistently met the demand of keeping that equipment cool and operating.

As rack power densities trended upward to 10 kW or more, the air-cooled configuration evolved into a hot aisle/cold aisle containment layout, which delivered significant energy savings over prior designs.

Most IT equipment takes in cold air at the front of the unit and exhausts hot air at the back. In the hot aisle/cold aisle arrangement, adjacent rows of server racks are oriented so that their "fronts" face each other across a cold aisle and their "backs" face each other across a hot aisle. Cold supply air is then delivered directly to each cold aisle and can be matched to the servers' airflow requirements for maximum efficiency, while hot exhaust air is collected from the hot aisles.

But it is now 2018, and rack densities continue to increase. Many experts have suggested that as rack power densities approach 20 kW per rack, air-cooled systems reach the limit of what they can cool economically. As more data centers aim to fill racks to capacity, liquid cooling becomes a more viable solution, capable by some estimates of cooling 100 kW per rack.

One type of liquid-based system pumps fluid through pipes around the equipment that needs to be cooled; the liquid absorbs the heat produced by the electronics and carries it away. Liquid cooling technologies are also advancing with approaches such as immersion cooling, in which servers or their components are submerged in a dielectric (electrically non-conductive) fluid, or such a fluid is circulated within them, to remove the heat generated by higher power loads.
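Why liquid can carry so much more heat comes down to a simple energy balance, Q = ṁ·c_p·ΔT: the heat a coolant removes equals its mass flow times its specific heat times its temperature rise. The sketch below is an illustrative calculation, not a design tool. The fluid property values are approximate room-temperature figures, and the 10 K temperature rise, the rack sizes and the function name `volumetric_flow_m3_per_s` are assumptions chosen for illustration.

```python
"""Rough sensible-heat sizing: coolant flow needed to carry away a rack's heat.

Uses the energy balance Q = m_dot * c_p * delta_T. Fluid properties are
approximate room-temperature values (illustrative assumptions, not design data).
"""

# Approximate properties: (specific heat in J/(kg*K), density in kg/m^3)
FLUIDS = {
    "air": (1005.0, 1.2),
    "water": (4180.0, 1000.0),
}


def volumetric_flow_m3_per_s(heat_w: float, fluid: str, delta_t_k: float) -> float:
    """Volumetric flow (m^3/s) of `fluid` needed to absorb `heat_w` watts
    while the fluid warms by `delta_t_k` kelvin."""
    cp, rho = FLUIDS[fluid]
    mass_flow_kg_s = heat_w / (cp * delta_t_k)  # from Q = m_dot * c_p * dT
    return mass_flow_kg_s / rho


if __name__ == "__main__":
    # Compare the ~20 kW air-cooling limit with the ~100 kW liquid estimate.
    for rack_kw in (20, 100):
        for fluid in ("air", "water"):
            flow = volumetric_flow_m3_per_s(rack_kw * 1000, fluid, delta_t_k=10.0)
            print(f"{rack_kw} kW rack, {fluid}: {flow * 1000:.2f} L/s")
```

With these assumed properties, water needs roughly 1/3,500 of the volumetric flow that air needs for the same heat load and temperature rise, which helps explain why liquid loops can serve racks well beyond the ~20 kW point often cited for air.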

Liquid cooling is growing as an important technology and becoming mainstream

Likely the most important benefit of liquid cooling is its flexibility and scalability. It provides a targeted solution in raised-floor environments without requiring an overhaul of the entire infrastructure. A liquid-cooled system can reduce a data center facility's overall power consumption and improve its power usage effectiveness (PUE), the ratio of total facility energy to IT equipment energy and the de facto metric of infrastructure energy efficiency. These efficiency gains bring environmental benefits, including lower power use, reduced emissions and less waste overall.
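PUE is total facility energy divided by the energy delivered to IT equipment, so a value of 1.0 would mean every kilowatt-hour goes to computing. A minimal sketch, using purely hypothetical energy figures (not measurements from any real facility), shows how cutting cooling energy moves the ratio. The function name `pue` and the assumed halving of cooling energy are illustrative only.

```python
def pue(it_kwh: float, cooling_kwh: float, other_overhead_kwh: float) -> float:
    """Power usage effectiveness = total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    total_kwh = it_kwh + cooling_kwh + other_overhead_kwh
    return total_kwh / it_kwh


if __name__ == "__main__":
    # Hypothetical energy for one period; overhead covers lighting, power losses, etc.
    it_load = 1000.0
    overhead = 100.0
    air_cooled = pue(it_load, cooling_kwh=800.0, other_overhead_kwh=overhead)
    # Assumption for illustration only: liquid cooling halves cooling energy.
    liquid_cooled = pue(it_load, cooling_kwh=400.0, other_overhead_kwh=overhead)
    print(f"Air-cooled PUE:    {air_cooled:.2f}")
    print(f"Liquid-cooled PUE: {liquid_cooled:.2f}")
```

With these assumed numbers, halving cooling energy lowers PUE from 1.90 to 1.50; actual savings depend heavily on the facility, climate and workload.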

The growth in power density and its causes

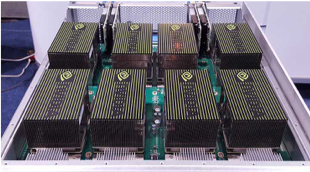

Consumer demand for faster and more complex services is the root cause of this growth. Artificial intelligence (AI), supercomputing and applications such as gaming, 3D graphics and the Internet of Things (IoT) have spawned server equipment with much higher cooling requirements. Graphics processing units (GPUs), specialized processors that demand more power than the central processing unit (CPU) of a typical server, are key to all of these compute-intensive initiatives. Companies such as NVIDIA, which originally developed GPUs primarily for gaming and 3D graphics, continue to find new uses for them.

However, GPU server hardware generates nearly two-thirds more heat than standard servers do.

Challenges to data center developers, cloud companies and colocation operators

Enterprise companies continue the major trend of outsourcing all or most of their IT operations to third-party colocation and cloud providers. As more enterprises demand high-density solutions from these operators, hosting providers will need to meet that demand, which may require significant upgrades to their cooling systems in an already highly competitive market.

Many hyperscale cloud operators, such as Alibaba, Google, Amazon, Apple, Baidu, Microsoft and Oracle, are reportedly already investing in this technology to support their high-performance computing (HPC) applications and their AI customers' demands.

Colocation operators that also compete in the HPC sector and attract hyperscale cloud clients are allocating raised-floor areas dedicated to liquid-cooled racks. Some suppliers offering liquid-cooled systems and racks include Asetek, CoolIT Systems and ScaleMatrix, while other players focused on immersion cooling include Allied Control, Stulz and Green Revolution.

Conclusion

According to HTF Market Intelligence's "Data Center Liquid Cooling – Global Market Outlook (2017-2023)," the global market for data center liquid cooling was $820 million in 2016 and is forecast to reach $4.55 billion by 2023, a compound annual growth rate (CAGR) of ~28% over the forecast period.
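A quick check shows the cited figures are internally consistent. CAGR is (end value / start value)^(1/years) - 1; the sketch below takes the 2016 figure as the base and compounds over the seven years to 2023, which is an assumption about how the report counts its forecast period. The function name `cagr` is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Market size in billions of USD, as cited from the HTF report.
    rate = cagr(0.82, 4.55, 2023 - 2016)
    print(f"Implied CAGR 2016-2023: {rate:.1%}")
```

This prints an implied CAGR of about 27.7%, in line with the ~28% the report cites.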

Close to half of a data center's total energy use can go to keeping equipment properly cooled. Removing the heat from today's growing power densities is a challenge for air-cooled systems, and liquid cooling offers benefits that air cooling and other approaches simply cannot match.